AI Code Assistants Compared: Copilot vs ChatGPT vs Claude

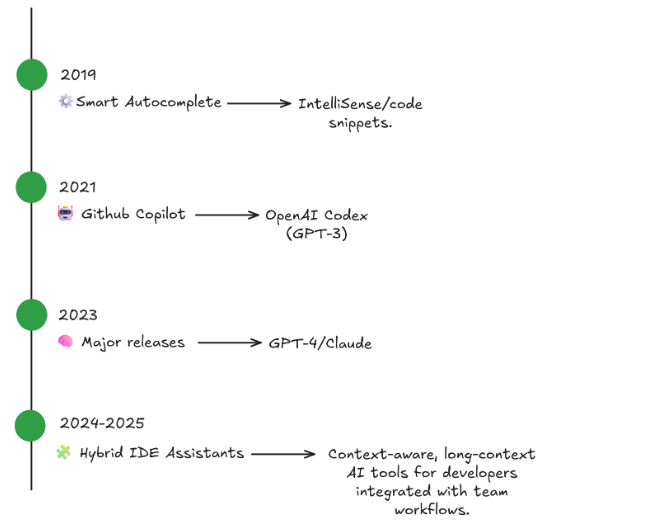

Not long ago, autocomplete tools in IDEs were little more than a way to save keystrokes. They suggested words based on the existing code and offered snippets for boilerplate.

Today, such tools have evolved into full-fledged programming assistants that can read, write, and even reason about code. For both developers and engineering managers, these AI coding tools promise a jump in productivity, but not all of them work in the same way.

In this post, we’re going to compare AI assistants to highlight their strengths and weaknesses and determine when and where they are useful for daily AI-assisted development. In particular, we focus on three of the most popular tools available today:

- GitHub Copilot

- ChatGPT for code

- Claude for developers

However, our aim is not to crown a single winner, but to find the best AI code assistant for a particular workflow.

The Rise of Code Assistants

Large Language Models (LLMs) have gained a lot of popularity across different fields, mainly because of their ability to interact with humans through natural language conversations.

Following this trend, AI tools for developers can draw on that ability to translate human intent into code, debug, and review pull requests. With the advent of autocomplete models based on GPT-3, and of GitHub Copilot, such tools have become far more popular. Current models such as GPT-5 and Claude Sonnet 4.5 work under the same paradigm, but they have extended it well beyond autocompletion.

Thanks to these models, it’s now possible to interact not only through keystrokes but also through conversation. Instead of “typing until it works,” teams can now describe intent and let the assistant generate structure, style, and documentation.

GitHub Copilot

We’ll start with GitHub Copilot, which quietly revolutionized how millions of engineers do their work. First powered by OpenAI Codex and currently by GPT-5 mini, GitHub Copilot integrates natively into popular coding tools such as JetBrains IDEs, VS Code, and even Neovim. Other models are also available through the user’s settings.

Some of the main features of Copilot are the following:

- It autocompletes code on the fly.

- It works on its own, with no explicit prompts required.

- It works best for repetitive logic and utility functions.

- It is especially useful in typed languages (Go, TypeScript, etc.).

For example, here is a function body that Copilot completes in full in TypeScript:

```typescript
// User types:
function formatDate(date: Date): string {
  // Copilot suggests:
  return date.toISOString().split('T')[0];
}
```

Copilot has limitations worth pointing out. Its inline suggestions draw on limited context (the current file and open tabs), so it struggles to “reason” across modules or architectures. It also gives little explanation of why it generated a given snippet.

Nevertheless, Copilot also lets the user open a chat window, which enables extra features such as the “Ask”, “Agent”, and “Edit” modes. This way, you can overcome the limitations stated above by working with the model outside of the file itself (i.e., without autocompletion).

From an AI-assisted developer’s point of view, GitHub Copilot shines in micro-productivity: it reduces keystrokes and repetition and speeds up boilerplate. On the other hand, its inline completions are less useful for fixing bugs or reasoning about the design of complex systems that exceed the scope of a single file.

Manager Insight: The GitHub Octoverse 2025 report states that artificial intelligence solutions for software development were key to reaching record productivity levels during the year.

ChatGPT for Coding

Rather than being an autocompletion tool inside your IDE, ChatGPT allows your team to work in a broad conversational space. Based on GPT-5, ChatGPT for coding does more than provide inline suggestions; it’s a teaching, debugging, and exploration partner.

Because it runs inside a full chat window, you can use it to explain legacy code, debug stack traces, fix errors, create documentation files, and brainstorm architectural patterns before implementing them.

ChatGPT’s context is not limited to the current file or the open tabs. It can take in entire files or framework code for a task, which makes it very practical for debugging and teaching. Although the chat window lives outside the IDE, there is an official extension that integrates it into your workspace.

Despite these advantages, responses can sometimes be wordy, or too simplistic for real-world complexity. Good prompt engineering skills may therefore be needed to improve the model’s responses. Still, ChatGPT provides a good training environment for ramping up new hires or helping a cross-skilled team with a completely unfamiliar tech stack.

Claude for Developers

The last tool we’re going to cover is also the most recent of the three to become available. Despite that, it surprised people mainly because of its large context window. Claude’s models can handle up to 200,000 tokens (even more in some cases), which makes them very useful for diving into entire repositories or lengthy documentation files in one go.
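To get a feel for what a 200,000-token window means in practice, here is a rough sketch that estimates whether a set of files would fit. It assumes about four characters per token, which is only a coarse rule of thumb; real tokenizers vary by model and content. The names `estimateTokens` and `fitsInContext`, and the `reservedForReply` headroom, are hypothetical and not part of any Claude API.

```typescript
// Assumption: ~4 characters per token. This is a coarse heuristic, not a real tokenizer.
const APPROX_CHARS_PER_TOKEN = 4;

// Rough token estimate for a piece of text.
function estimateTokens(text: string): number {
  return Math.ceil(text.length / APPROX_CHARS_PER_TOKEN);
}

// Checks whether the combined files fit in the window, leaving headroom for the reply.
// The 200,000 default is the figure quoted in this article; the headroom is an arbitrary example value.
function fitsInContext(
  fileContents: string[],
  contextWindowTokens: number = 200000,
  reservedForReply: number = 8000
): boolean {
  let total = 0;
  for (const content of fileContents) {
    total += estimateTokens(content);
  }
  return total <= contextWindowTokens - reservedForReply;
}
```

An estimate like this is only good for a quick sanity check before pasting a large repository into a prompt; for anything close to the limit, count tokens with the provider's own tooling.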

Thanks to Claude’s ability to read and reason across multiple files, it outputs balanced and straightforward responses with less filler text. It is also well recognized for “understanding intent” in huge codebases.

With a single prompt such as “Review this pull request and list any redundant logic or unnecessary updates.”, Claude summarizes the changes, points out which conditions are duplicated, and returns refactoring suggestions. In some ways, it mirrors the code-review process.

Among Claude’s limitations, its performance can degrade on very large projects. It also lacks fine-grained code execution, which prevents it from running quick tests. Nonetheless, its long-context capability improves documentation, reduces potential merge errors, and helps standardize style guides, which makes Claude a great assistant for your daily work.

Productivity

The use of AI code assistants by developers is not only a trend; it actually improves the way they work. Independent studies and surveys such as GitHub Octoverse 2025 and Stack Overflow Pulse have shown that developers report improved productivity thanks to AI tools.

But not all models perform the same way. Several public leaderboards compare model performance across tasks. For example, LMArena shows GPT-5 in first place for web-related tasks, with Claude Opus 4.1 in second place. The same ranking is observed in DesignArena.

One of developers’ big concerns is how easily an AI tool integrates into their working environment. On this front, Copilot offers deep IDE integration, while ChatGPT and Claude can be used through the web, through an IDE extension, and even through their APIs.

To sum up, GitHub Copilot is a good fit for rapid coding; ChatGPT excels at debugging and mentoring, and Claude does a great job in refactoring and reviewing code.

There is also a growing trend of companies hiring software developers with AI-assisted development know-how.

Cost

Copilot subscriptions start at $10 per month for individuals and $19 per user per month for businesses. The ChatGPT Plus tier costs $20 per month, whereas the business plan costs $25 per seat. Claude’s Pro subscription starts at $17 per month for individuals, and each seat of the enterprise plan starts at $25.

These pricing differences may affect adoption by some developers or companies, depending on their budget. When analyzing costs, it is also important to consider the ROI that each assistant could provide.
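As a back-of-the-envelope aid for that ROI question, the arithmetic can be sketched as follows. The prices are the ones quoted in this article, assumed here to be in USD per seat per month; the function names and the hourly rate are hypothetical inputs, not figures from any vendor.

```typescript
// Prices as quoted in this article, assumed to be USD per seat per month.
const SEAT_PRICE_USD = {
  copilotBusiness: 19,
  chatgptBusiness: 25,
  claudeEnterprise: 25,
} as const;

// Yearly subscription cost for a team of a given size.
function annualCostUsd(seatPriceUsd: number, seats: number): number {
  return seatPriceUsd * seats * 12;
}

// Hours one developer must save per month for a seat to pay for itself.
// hourlyRateUsd is the loaded cost of a developer hour, an input you must supply.
function breakEvenHoursPerMonth(seatPriceUsd: number, hourlyRateUsd: number): number {
  return seatPriceUsd / hourlyRateUsd;
}
```

For a ten-person team, `annualCostUsd(SEAT_PRICE_USD.copilotBusiness, 10)` gives 2280. With a hypothetical rate of $50 per hour, `breakEvenHoursPerMonth(25, 50)` gives 0.5, meaning a $25 seat pays for itself once it saves half an hour a month.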

Privacy

The use of an AI programming assistant involves sharing information that could be valuable for you or your company: a piece of code, business rules, proprietary information, etc. To prevent security risks or data breaches, it’s important to know how the information you provide could be used by the models or by the companies that own them.

This use differs by provider. As a general rule, the three tools under analysis have different policies for individual and business plans. For example, GitHub Copilot collects code snippets that are used for product analysis, except in the case of private repositories from users under Copilot Business or Enterprise plans.

OpenAI, on the other hand, uses information from individual users to train its models, but users can always opt out to stop such collection. The opt-out does not cover information received through explicit feedback (i.e., the thumbs up / thumbs down buttons), which is always collected and used to improve the models. Moreover, if you use temporary chats, the conversation is not stored in memory or used for training.

For business plans, OpenAI only uses the information if the user has explicitly agreed to it.

Claude’s policies center on the privacy and security of the data, which means that users choose whether to allow their data to be used for model training. This choice does not apply to information shared through explicit feedback on the model’s responses, nor to conversations flagged for safety review. For commercial users, on the other hand, data is used only if they agree to participate in the Development Partner Program.

Finally, if you’re seeking to avoid disclosing data to any third party, you should consider a custom on-premises deployment built on open-source AI tools that are free to install and can be hosted locally. Generative AI development services combine these models with private cloud assets so that organizations retain full control of their data.

Same Task, Three Assistants

Let’s compare the three tools with a quick example. Assume you’re working in a small team that needs to develop a Node.js API with pagination. You have access to all three assistants covered in this post, so you give each of them exactly the same prompt:

Write an Express route that should return paginated results from a database collection.

GitHub Copilot will probably be the first to respond. It will start typing in the current file inside your IDE, producing the code needed for a working endpoint that fetches the data from the database and returns it to the client. Where needed, Copilot will leave useful comments so you can better understand how the code works. No additional output or explanation will be given.

ChatGPT, on the other hand, will spend some time thinking about the request. It will then start by explaining what an API is and how it works, followed by some strategies and best practices commonly used by the community. Next, it will present a piece of code that implements the required task, and conclude with an example of how the new route should be called from a frontend application.

Finally, Claude will read the prompt and perform a deep analysis of the requirement. Based on that, it will return a full working example, pointing out relevant insights such as performance issues, potential trade-offs, and best practices.

From a developer’s perspective, GitHub Copilot provides the fastest implementation; ChatGPT teaches and supplies foundational knowledge; and Claude delivers a more thorough result aimed at high quality.
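For reference, the core of what the prompt asks for can be sketched without any framework. The block below shows offset-based pagination over an in-memory array that stands in for the database collection. `Page`, `toPositiveInt`, `paginate`, and the `page` and `pageSize` parameters are illustrative names chosen for this sketch, not output from any of the three assistants.

```typescript
// Hypothetical shape of one page of results; not taken from any library.
interface Page<T> {
  items: T[];
  page: number;
  pageSize: number;
  totalItems: number;
  totalPages: number;
}

// Parses a query-string value into a positive integer, falling back to a default.
function toPositiveInt(value: unknown, fallback: number): number {
  const parsed = parseInt(String(value), 10);
  return parsed > 0 ? parsed : fallback; // NaN and non-positive values use the fallback
}

// Offset-based pagination over an array that stands in for a database collection.
// Out-of-range pages are clamped to the last page; page size is capped at maxSize.
function paginate<T>(
  all: T[],
  pageParam: unknown,
  sizeParam: unknown,
  maxSize: number = 100
): Page<T> {
  const pageSize = Math.min(toPositiveInt(sizeParam, 10), maxSize);
  const totalPages = Math.max(1, Math.ceil(all.length / pageSize));
  const page = Math.min(toPositiveInt(pageParam, 1), totalPages);
  const start = (page - 1) * pageSize;
  return {
    items: all.slice(start, start + pageSize),
    page,
    pageSize,
    totalItems: all.length,
    totalPages,
  };
}
```

In an Express app, a route could wrap this as `app.get("/items", (req, res) => res.json(paginate(items, req.query.page, req.query.pageSize)))`, assuming `items` holds the loaded records. With a real database you would normally push the offset and limit into the query rather than slicing in memory.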

A Few Takeaways

To make the most of AI coding tools, keep the following in mind:

- Learn as you go. Roll out one assistant for one team; see how that goes, and then change things based on results.

- Set boundaries. Let AI implement repetitive code, write documentation, or generate tests, but keep humans in charge of reviewing the output and making the final decisions.

- Get everyone up to speed. Offer quick workshops on how to write good prompts and understand what can be done with AI.

- Keep your data safe. Redact sensitive data before sending anything to external services.

- Keep improving. Treat AI integration like any other experiment: check what’s working, modify it, and try again.
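The data-safety point can be made concrete with a small pre-processing step that runs before a snippet is pasted into an external assistant. This is a minimal sketch: the two patterns and the name `redactSensitive` are assumptions for illustration, and a real setup would need a vetted and much broader rule set.

```typescript
// Illustrative patterns only (assumptions); they will miss many kinds of sensitive data.
const SECRET_ASSIGNMENT = /\b(api[_-]?key|token|password)(\s*[:=]\s*)\S+/gi;
const EMAIL_ADDRESS = /[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}/g;

// Masks secret-looking assignments and email addresses before text leaves your machine.
function redactSensitive(text: string): string {
  return text
    .replace(SECRET_ASSIGNMENT, "$1$2[REDACTED]")
    .replace(EMAIL_ADDRESS, "[REDACTED_EMAIL]");
}
```

For example, `redactSensitive("password: hunter2 sent to bob@example.com")` returns `"password: [REDACTED] sent to [REDACTED_EMAIL]"`. Pattern-based redaction is a safety net, not a guarantee, so it works best alongside a team policy on what may be shared at all.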

Several generative AI consulting companies are following this approach to conduct their business.

Wrap Up

The best AI code assistant depends on the team size, the scale of the project, and other variables.

If you’re a solo developer or a freelancer, try GitHub Copilot. It offers instant completions and lightweight integration, which can also be improved by using the chat window. For startups or enterprise engineering groups, ChatGPT and/or Claude are both a good fit. Both of them provide long-context reasoning, documentation, and review automation, as well as versatile debugging and design exploration.

Privacy-sensitive organizations should consider a private LLM deployment in order to retain full control via an open-source or tailored model.

Before making a final decision, it’s a good idea to assess each tool individually against metrics such as the average time from a bug being reported to its resolution, the turnaround time for reviews, and the completeness of the documentation.
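The first of those metrics is simple to compute from an issue-tracker export, which makes it a handy baseline to record before and after a pilot. The sketch below assumes a hypothetical `BugRecord` shape with report and resolution timestamps; adapt the fields to whatever your tracker provides.

```typescript
// Hypothetical record shape; adapt it to whatever your issue tracker exports.
interface BugRecord {
  reportedAt: Date;
  resolvedAt: Date | null; // null while the bug is still open
}

const MS_PER_HOUR = 60 * 60 * 1000;

// Average hours from report to resolution, ignoring bugs that are still open.
// Returns null when no bug has been resolved yet.
function meanHoursToResolution(bugs: BugRecord[]): number | null {
  let totalHours = 0;
  let resolvedCount = 0;
  for (const bug of bugs) {
    if (bug.resolvedAt !== null) {
      totalHours += (bug.resolvedAt.getTime() - bug.reportedAt.getTime()) / MS_PER_HOUR;
      resolvedCount += 1;
    }
  }
  return resolvedCount === 0 ? null : totalHours / resolvedCount;
}
```

Comparing this number for the same team across two periods, one without the assistant and one with it, gives a rough signal, though team size, bug mix, and release timing can all skew it.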

So, instead of choosing between Copilot vs ChatGPT, or ChatGPT vs Claude, use each for what it does best. If you need a super-fast coding assistant, use Copilot; it will help you churn out code as quickly as you can imagine it. If you need clarity on complex ideas, ChatGPT is a patient tutor. Finally, Claude will carefully review everything and point out ways to make things even better, or catch sneaky errors before shipping.